Know your data 47: AI solves missing connections (or not)

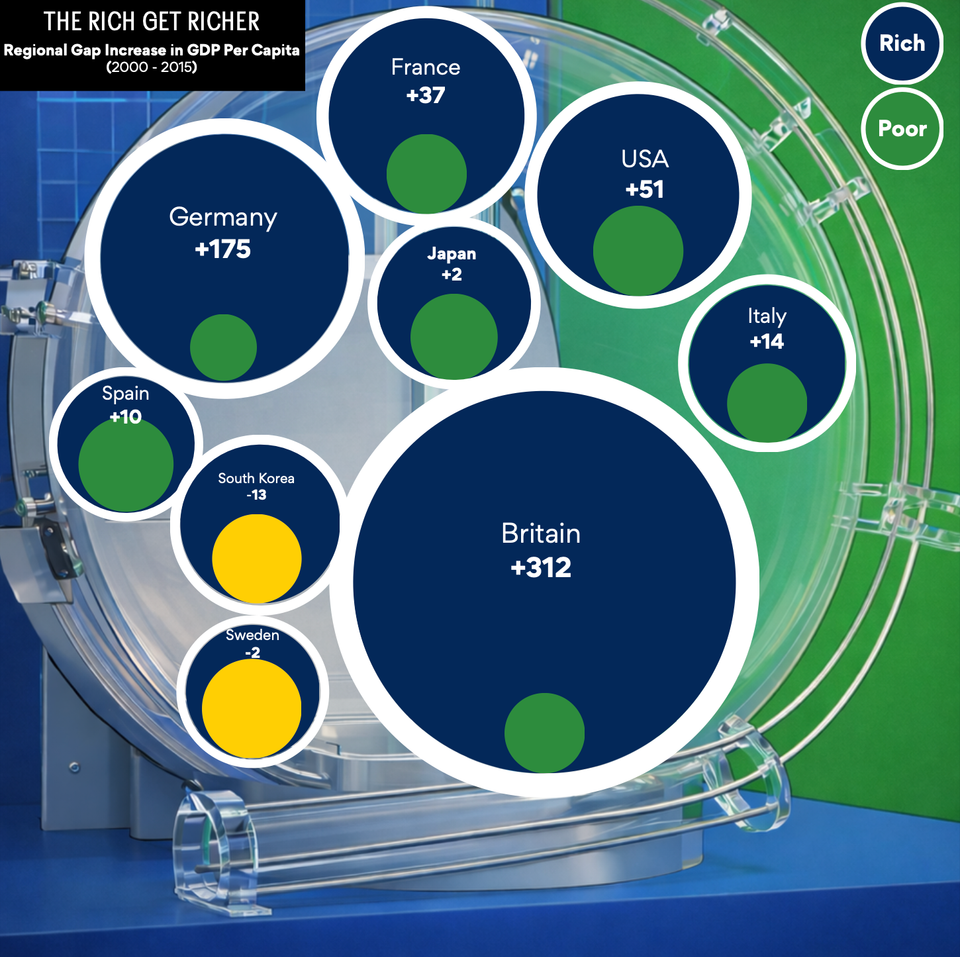

AI will connect you to someone far far away

A sob story (link) about a grandmother in Tennessee raises a number of issues related to use of AI tools by law enforcement.

As reported, Angela Lipps was falsely accused of bank fraud near Fargo, North Dakota, a crime she did not commit. She was charged in ND, arrested and jailed in Tennessee, and extradited to ND. Ultimately the charges were dismissed, and she was released after five months 🥲.

Lipps’s suffering was due to "misidentification". Her lawyer was able to produce bank records that proved that she was in Tennessee at the time of the crime. Tennessee is over 1,000 miles (1,600 km) away from North Dakota. In fact, according to Lipps, she doesn’t travel and has never even boarded an airplane, let alone gone to North Dakota!

The situation ultimately got traced to the notorious face surveillance company, Clearview AI. This private company makes a business out of scraping images from social media and online sources, building a gigantic database that is used to “doxx” people.

Let’s dissect that bit by bit.

I deliberately use the word “doxx”. Doxxing is usually associated with someone publishing someone else’s personally identifiable information (PII, such as names and addresses) without that person’s consent, usually for the purpose of shaming, revenge, etc. According to Wiki (link), the U.S. has weak regulations in this area; only a few states consider doxxing illegal.

The contempt for doxxing appears to stop at the corporate door. Clearview’s raison d'être is doxxing on steroids. Its entire business is tagging images with people’s names (which naturally leads to other PII data, given the motivation of its customers). Instead of putting it up on social media, like a political activist might do, Clearview sells the information to someone willing to pay, also without that person’s consent, and worse, behind that person’s back. Government agencies are its primary customers.

According to the Wiki page, Clearview settled a lawsuit in 2022, agreeing not to sell to “private individuals and businesses.” But the linked CNN article used the qualified phrase, “most companies in the United States”. The Lipps case obviously shows that government use of such a tool is not harmless.

The other key word is “scraping,” about which I wrote recently (here). Clearview is engaged in large-scale harvesting of images across multiple platforms; that is their central value proposition. For this business, they need as many images as possible, as recent as possible. Have you heard a peep from Facebook/Instagram, Twitter, Google, etc. about Clearview illicitly taking images from their properties? Neither have I. Years ago when the New York Times covered this company, they made some noise but there have been no lawsuits or enforcement actions that I’m aware of.

The Lipps case is highly instructive, showing us how surveillance data can sometimes harm people.

According to the police department that used Clearview to identify Lipps as the criminal, the true criminal had used Lipps’s picture on a fake ID. How did the true fraudster have access to Lipps’s picture? Most likely from social-media scraping!

Misidentification is evidently a misnomer. If we believe the police’s story, then Clearview correctly identified Lipps from the fake ID photo. The problem appears to be that the police ran with that match, without looking for corroborating evidence. A surveillance image actually existed that would have exonerated Lipps had it been inspected.

The Lipps case also shines a light on the gray legal area in which these law enforcement agencies work. The Fargo jurisdiction did not have any AI for facial recognition, so it asked the neighboring West Fargo police to help out, because they have Clearview. What’s to stop Clearview users from doxxing someone for non-official matters?

(By the way, Clearview’s sales team is probably knocking on the doors of the two police departments – because they have learned that users are sharing their Netflix accounts, so to speak.)

The proposed solution by the Fargo police is to route such identification requests to the North Dakota State and Local Intelligence Center, which has specific expertise in AI tools.

When I read that, I said to myself, I bet NDSLIC also uses Clearview, which would not have made a difference in the Lipps case. A quick search confirmed it. The West Fargo police chief defended his department (link), saying exactly that: “[we] did send it to NDSLIC, which returned the exact same results using the identical Clearview AI software.”

In the U.S., there is so much involvement by private entities in these aspects of law enforcement that it becomes very hard to figure out if proper and legal process has been applied.

Undoubtedly, facial recognition technology has solved, and will continue to help solve, crimes. But just as surely, such technologies, under various, sometimes unexpected, circumstances, will result in innocent people being “harassed,” and in Lipps’s case, thrown in jail for months. Where do we draw the line?

P.S. [4/1/2026] Other posts in the Know Your Data series are here.