Instacart bows to pressure

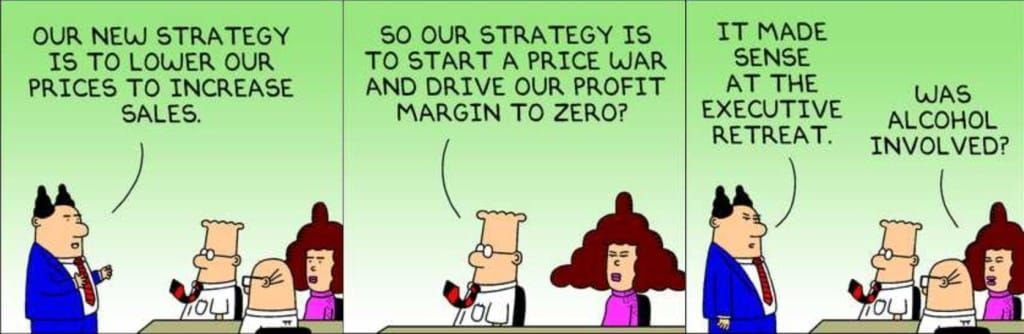

What is the point of pricing tests if management isn't intending to change prices?

The public reaction to the Consumer Reports study on Instacart's pricing strategies has forced the company to "end all item price tests," which are part of its Eversight offering. (See also my previous post about the study.)

Within days of the CR report, Instacart initially disputed some details of the study (link), but eventually announced the end of these AI pricing experiments (link).

These press releases provide a few more insights about what was happening.

Instacart says retailers already charge different prices at different physical stores for the same item. The revised policy does not prohibit online retailers from charging different prices for the same item based on IP addresses (or phone numbers or other ways to geo-locate shoppers). This itself is an intriguing admission. The Internet is supposed to "flatten" the world, bringing everyone closer together, but is that more hype than reality? When retailers duplicate brick-and-mortar practices online, they are conceding that the online presence (which in theory can be launched once for every location) has not altered location-driven economics, if we believe what they're saying.

Retail partners who feature on Instacart can subscribe to a pricing tool called "Eversight." Instacart purchased this capability from a startup called Eversight Labs in 2022.

Eversight marketing materials said the platform is designed for retailers to run "millions of tests" "all the time". Instacart claimed that only "10 of its retail partners" use Eversight for pricing experiments, according to CR. Instacart suggested that using Eversight could increase sales by 1-3 percent, and margins by 2-5 percent. Let's think about how that may be possible.

We first entertain a traditional test-and-learn setting, in which the price experiment involves a randomly selected subset of shoppers, and is turned on for a short period of time to collect enough samples for a statistical read of the result.

The objective of the pricing experiment is to determine the "optimal" price for an item, attained by measuring customers' price elasticity. The expected outcome of the experiment is a price adjustment; the revised price is both fixed and universal (for the population specified in the test). If the outcome is a price hike, the test result must have predicted that the loss of sales due to the higher price is more than offset by the additional revenues generated by the price hike from customers undeterred by it.
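The price-hike condition above can be made concrete with a little arithmetic. Here is a minimal sketch, assuming revenue is simply price times units sold; the function names and the example numbers are mine, for illustration only, and do not come from Instacart or CR.

```python
def revenue_change(price_change: float, quantity_change: float) -> float:
    """Fractional change in revenue, given fractional changes in price and units sold.

    Assumes revenue = price * units, so the two effects multiply.
    """
    return (1 + price_change) * (1 + quantity_change) - 1


def break_even_quantity_drop(price_change: float) -> float:
    """Largest fractional drop in units that a price hike can absorb
    before revenue falls below its pre-hike level."""
    return price_change / (1 + price_change)


if __name__ == "__main__":
    # Hypothetical numbers: a 5% hike that costs 3% of unit sales.
    print(revenue_change(0.05, -0.03))
    # How much volume could that 5% hike lose and still break even?
    print(break_even_quantity_drop(0.05))
```

In this hypothetical, a 5% hike that loses 3% of units still lifts revenue by roughly 1.85%, and the hike only backfires once the loss of units exceeds about 4.8%.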

As discussed in my previous post, the net improvement in revenues has to be quite large to justify trading away the comfort of inertia. For this reason, the outcome is much more likely to be a price increase than a price decrease. This behavior, I think, is a type of endowment effect of interest to behavioral psychologists.

If the expected outcome is a price increase (or no change), it follows that the set of test price levels looks more like [base, +2%, +3%] than [-2%, base, +2%]. That's why I suggested computing the average displayed price as a way of learning whether the pricing experiment effectively raised prices.
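The average displayed price is easy to compute once the test price levels are known. The sketch below assumes shoppers are split evenly across the test arms unless other shares are supplied; the function name, the $10.00 base price, and the even split are illustrative assumptions, not details reported about Eversight.

```python
from typing import Optional, Sequence


def average_displayed_price(
    base_price: float,
    pct_changes: Sequence[float],
    shares: Optional[Sequence[float]] = None,
) -> float:
    """Average price shown to shoppers across all test arms.

    pct_changes: fractional price change in each arm (0.02 means +2%).
    shares: fraction of shoppers assigned to each arm; defaults to an even split.
    """
    if shares is None:
        shares = [1 / len(pct_changes)] * len(pct_changes)
    return sum(s * base_price * (1 + c) for c, s in zip(pct_changes, shares))


if __name__ == "__main__":
    base = 10.00  # hypothetical list price
    print(average_displayed_price(base, [0.0, 0.02, 0.03]))    # skewed toward hikes
    print(average_displayed_price(base, [-0.02, 0.0, 0.02]))   # symmetric levels
```

With the skewed set, the average displayed price sits about 1.7% above the base price; with the symmetric set, it matches the base price.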

Instacart's primary pushback on the CR study is that Eversight offers "testing," suggesting that these are temporary price changes that disappear after the tests are over. This defence makes no sense for a number of reasons.

If the retailer has no intention of changing prices, why conduct pricing tests?

If indeed list prices remain the same post-test, then the incremental revenues marketed by Eversight would have been achieved during the period of testing. Further, if the price changes were purely randomly applied, as Instacart asserted, then the set of test price levels is likely skewed toward price hikes and not price decreases.

Assuming the retailer found out from the price testing that it makes more money by raising prices by 5%, why would they not roll out the price hike?

Finally, consider the possibility that a retailer is always running price tests. It gives a new meaning to "testing."

Additionally, it is a fallacy to think that if the test price levels are symmetric, e.g. [-2%, base, +2%], then the experiment does not alter the status quo. This is a subtle point.

The no-change scenario only materializes if the tested prices do not affect consumer behavior. For example, test prices of [-1 cent, base, +1 cent] most likely result in all three test subgroups exhibiting the same buying propensity. This is of course a silly test.

A more probable outcome is that transactions shift in inverse proportion to the price changes. The +2% subgroup buys fewer units while the -2% subgroup buys more units. The price elasticity may be nonlinear, in which case the total revenues obtained during the test may be higher, or lower, than the pre-test amount.
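To see why a symmetric test can still move total revenues, here is a rough sketch under one specific assumption that is mine, not Instacart's: demand follows a constant-elasticity curve, so units sold scale as (price / base price) raised to the elasticity. The function name and the elasticity values are hypothetical.

```python
from typing import Sequence


def revenue_ratio_during_test(pct_changes: Sequence[float], elasticity: float) -> float:
    """Average revenue across equally sized test arms, relative to charging
    the base price to everyone.

    Assumes constant-elasticity demand: units ~ (price / base) ** elasticity,
    so revenue per arm ~ (price / base) ** (1 + elasticity).
    """
    ratios = [(1 + c) ** (1 + elasticity) for c in pct_changes]
    return sum(ratios) / len(ratios)


if __name__ == "__main__":
    symmetric = [-0.02, 0.0, 0.02]
    for e in (-3.0, -1.0, -0.5):  # hypothetical elasticities
        print(e, revenue_ratio_during_test(symmetric, e))
```

Under this assumed demand curve, the symmetric test leaves revenue unchanged only when the elasticity is exactly -1. When shoppers are more price-sensitive than that, the ratio comes out slightly above 1, and when they are less sensitive, slightly below 1.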

Running tests "all the time" only makes sense if the vendor is confident that these tests in aggregate improve business outcomes. This setting is incompatible with the idea of an unbiased test with symmetric price levels. If management has such a crystal ball, they should just implement the price changes, without a need to run tests!